Mean Resolution Velocity: The Capacity Metric Most IT Teams Aren't Tracking

MRV measures ticket resolution throughput, not just duration — and declining MRV is the earliest signal of IT capacity erosion.

FIELD_REPORT // MRV-001 // CLASSIFICATION: CAPACITY DIAGNOSTIC



Unplanned Work Ratio

Top 10% of IT Organizations

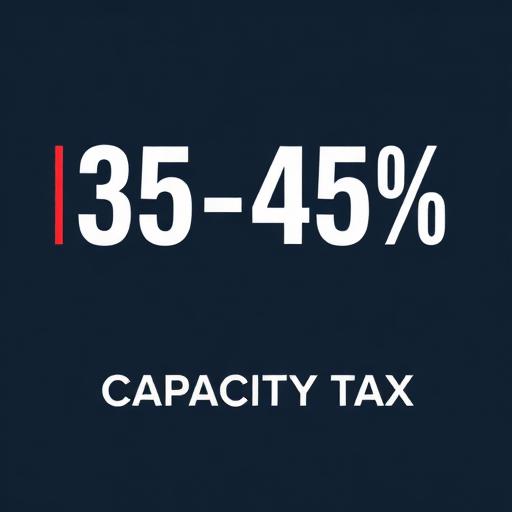

Industry median: 35-45%

Source: DORA 2025 / HDI Benchmarks


Capacity Degradation Sequence

Stage 1: Absorption — MRV declines, MTTR looks fine

Stage 2: Silent backlog — leadership sees green

Stage 3: Visible degradation — too late to recover

Mean Resolution Velocity (MRV) measures the rate at which weighted tickets are resolved per unit of time — not individual ticket duration. A declining MRV is the earliest measurable signal of IT capacity erosion, appearing months before MTTR rises. The Allari Stability Standard, verified over a 27-month longitudinal study, maintains a 1.77-day closing velocity against a 16.42-day pre-stabilization baseline.

Most IT operations teams track how long tickets take to close. Almost none track how fast work is actually moving through the system. That distinction — between duration and velocity — is the difference between diagnosing a capacity problem after it has already cost you a quarter, and catching it while you still have time to intervene.

MTTR Tells You Duration. MRV Tells You Velocity.

Mean Time to Resolution (MTTR) answers a single question: how long did this ticket take? It is backward-looking by design. You calculate it after the fact, per ticket, and it gives you a snapshot of individual resolution performance.

Mean Resolution Velocity (MRV) answers a different question: how fast is work moving through the system right now?

The difference matters. A team can hold acceptable MTTR on every individual ticket while MRV is declining — because the volume of work reaching resolution is dropping even though each ticket that does get closed still looks fine on paper. Think of it this way: MTTR measures the speed of a single car. MRV measures the throughput of the highway. You can have every car doing 65 mph and still have a traffic jam.

When MTTR stays flat but MRV drops, the backlog is growing. Tickets are stacking up faster than they are being closed. If you are only watching MTTR, you will not see this until ticket aging spikes weeks later — by which point the damage is structural, not operational.
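A minimal sketch of that dynamic, assuming steady demand and entirely hypothetical weekly numbers (none of these figures come from the study): the backlog is cumulative arrivals minus cumulative resolutions, so any week in which fewer tickets close than arrive adds to it, however quickly the closed tickets were handled. The function name `backlog_by_week` is illustrative.

```python
from itertools import accumulate


def backlog_by_week(arrivals, resolved, starting_backlog=0):
    """Return the open-ticket backlog at the end of each week.

    Backlog grows whenever weekly arrivals exceed weekly resolutions,
    regardless of how fast each resolved ticket was closed.
    """
    if len(arrivals) != len(resolved):
        raise ValueError("arrivals and resolved must cover the same weeks")
    net_change = [a - r for a, r in zip(arrivals, resolved)]
    return [starting_backlog + total for total in accumulate(net_change)]


# Hypothetical data: steady demand, slowly falling resolution throughput.
weekly_arrivals = [100, 100, 100, 100]
weekly_resolved = [100, 95, 90, 85]

backlog = backlog_by_week(weekly_arrivals, weekly_resolved)
print(backlog)  # [0, 5, 15, 30]
```

A 15% drop in weekly throughput is enough to put 30 tickets into the queue within four weeks in this example, and nothing in a per-ticket duration metric has to move for that to happen.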

What MRV Actually Measures

MRV quantifies tickets resolved per unit of time, normalized for complexity and priority weighting. The basic calculation:

MRV = Weighted Tickets Resolved / Time Period

Where weighted tickets account for severity distribution (a P1 incident carries more resolution weight than a P4 cosmetic request). This normalization is critical. Raw ticket counts reward teams for cherry-picking low-effort work. MRV, properly constructed, measures whether the team is moving real work through to resolution — not just running up a count on trivial items.
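The weighting can be sketched as follows. The article does not publish a weighting scheme, so the `PRIORITY_WEIGHTS` values, the function name, and the sample workloads are all assumptions chosen for illustration.

```python
from collections import Counter

# Hypothetical severity weights; illustrative only, not a published scheme.
PRIORITY_WEIGHTS = {"P1": 8.0, "P2": 4.0, "P3": 2.0, "P4": 1.0}


def mean_resolution_velocity(resolved_priorities, period_days, weights=PRIORITY_WEIGHTS):
    """Weighted tickets resolved per day over the given period."""
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    counts = Counter(resolved_priorities)
    weighted_total = sum(weights[priority] * n for priority, n in counts.items())
    return weighted_total / period_days


# Two teams that each closed 10 tickets in a 7-day week.
cherry_picked = ["P4"] * 10
mixed_workload = ["P1"] * 2 + ["P2"] * 3 + ["P3"] * 3 + ["P4"] * 2

mrv_cherry = mean_resolution_velocity(cherry_picked, period_days=7)
mrv_mixed = mean_resolution_velocity(mixed_workload, period_days=7)
print(round(mrv_cherry, 2), round(mrv_mixed, 2))  # 1.43 5.14
```

Both teams report the same raw count, but under these assumed weights the team working the mixed queue shows more than three times the velocity.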

We use the median rather than the mean for the underlying resolution times that feed MRV. Averages are susceptible to outlier distortion: a single 45-day ticket can make an otherwise healthy system look slow, and a few unusually fast closes can disguise a degrading one. The median is more robust to those extremes and gives a truer picture of typical operational performance.
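To illustrate the outlier effect, here is a small comparison using Python's standard `statistics` module. The resolution times are hypothetical.

```python
from statistics import mean, median

# Hypothetical resolution times, in days, for one reporting period.
typical_tickets = [1.0, 1.5, 2.0, 2.0, 2.5]
with_outlier = typical_tickets + [45.0]  # one long-running ticket added

mean_before = mean(typical_tickets)
mean_after = mean(with_outlier)
median_before = median(typical_tickets)
median_after = median(with_outlier)

print(mean_before, mean_after)      # 1.8 9.0
print(median_before, median_after)  # 2.0 2.0
```

One 45-day ticket multiplies the mean by five while the median does not move.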

The output is a rate, not a duration. And rates trend. That is what makes MRV useful: it gives you a trajectory, not just a data point.

ITIL Alignment: Throughput of Effective Change

MRV is not a new framework. It is a throughput lens applied to metrics that ITIL 4 and DORA already recognize as foundational.

ITIL 4's Change Enablement practice tracks change success rate — the percentage of changes implemented without causing unplanned outages, incidents, or rollbacks. DORA's performance model explicitly categorizes throughput (how many changes move through the system over time) alongside stability (how well those changes hold). Both frameworks treat speed and quality as correlated, not competing. The research is consistent: teams that move more changes through their systems also have fewer failures.

Where MRV adds value is in connecting these principles to the operational layer most ITIL implementations overlook — the day-to-day ticket queue where capacity actually lives. Change success rate tells you whether changes are holding. MRV tells you whether you have enough throughput to get changes into the queue in the first place. An organization can have a 95% change success rate and still be failing strategically if MRV is declining — because the changes that are succeeding represent a shrinking fraction of the changes that need to happen.

This is what we mean by throughput of effective change. It is not enough to succeed at the changes you attempt. You have to measure how many changes you are actually getting through the system relative to the demand. A team that successfully completes 20 changes per month with a 95% success rate is not outperforming a team that completes 60 changes per month with an 88% success rate — not if the first team has 80 changes in its backlog and the second team has 10.

Throughput of effective change = MRV × Change Success Rate.

Both numbers have to be healthy for the system to be healthy.
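Applied to the two teams in the example above, the product works out as follows. This is a sketch that substitutes raw changes per month for a weighted MRV, which is a simplification; the function name is illustrative.

```python
def effective_change_throughput(changes_completed, success_rate):
    """Changes per period that were both completed and held.

    Simplification: uses a raw change count where the article's
    formula calls for (weighted) MRV.
    """
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return changes_completed * success_rate


team_a = effective_change_throughput(20, 0.95)  # fewer changes, higher success
team_b = effective_change_throughput(60, 0.88)  # more changes, lower success

print(round(team_a, 1), round(team_b, 1))  # 19.0 52.8
```

The second team lands roughly 2.8 times as many successful changes per month despite the lower success rate.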

Why MRV Is a Capacity Indicator

This is where MRV earns its place in the diagnostic stack.

Declining MRV is often the first measurable signal that a team is saturated. Here is the typical sequence we observe in enterprise IT environments:

Stage 1: Absorption. Unplanned work begins rising toward the 35–45% of capacity it consumes in most IT organizations. The team absorbs it. Individual ticket resolution times stay within SLA, and MTTR looks fine. But the team is resolving fewer tickets per week because more time goes to context-switching, re-prioritization, and interrupt-driven work. MRV begins to decline.

Stage 2: Silent backlog. MRV continues to drop. Ticket aging creeps up, but not yet to alarming levels. The team is still closing everything it touches; it is just touching less. Leadership sees green on the MTTR dashboard and assumes operations are healthy.

Stage 3: Visible degradation. MTTR finally rises, and ticket aging is now measurable. By this point the team has been saturated for weeks, sometimes months. The capacity erosion is structural: you cannot fix it by working harder or adding overtime. You need to recover the capacity that unplanned work consumed.

The gap between Stage 1 and Stage 3 is the diagnostic window that MRV gives you. Rising MTTR is a lagging indicator. Declining MRV is a leading one. If you are not tracking MRV, you are seeing capacity erosion after the damage is done.
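One way to watch for that window is to compare the trend of the two series. The sketch below fits a least-squares slope to weekly MRV and weekly MTTR and flags the case where MRV is falling while MTTR is still roughly flat. The thresholds (`mrv_drop`, `mttr_flat`), function names, and sample data are assumptions for illustration, not values from the study.

```python
def relative_slope(values):
    """Least-squares slope per period, as a fraction of the series mean."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    if y_mean == 0:
        raise ValueError("series mean must be non-zero")
    covariance = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    variance = sum((x - x_mean) ** 2 for x in range(n))
    return (covariance / variance) / y_mean


def capacity_warning(weekly_mrv, weekly_mttr, mrv_drop=-0.02, mttr_flat=0.01):
    """True when MRV trends down while MTTR has not yet moved.

    mrv_drop and mttr_flat are hypothetical thresholds: a sustained MRV
    decline of 2% per week or worse, with MTTR drifting under 1% per week.
    """
    mrv_falling = relative_slope(weekly_mrv) <= mrv_drop
    mttr_steady = abs(relative_slope(weekly_mttr)) <= mttr_flat
    return mrv_falling and mttr_steady


# Hypothetical six-week series.
declining_mrv = [50, 48, 46, 44, 42, 40]    # weighted tickets per week
steady_mrv = [50, 50, 51, 49, 50, 50]
flat_mttr = [2.0, 2.1, 1.9, 2.0, 2.1, 1.9]  # days

print(capacity_warning(declining_mrv, flat_mttr))  # True
print(capacity_warning(steady_mrv, flat_mttr))     # False
```

A slope over several weeks is used instead of a week-over-week difference so that a single noisy week does not trigger or suppress the flag.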

Our verified benchmark — a 1.77-day closing velocity maintained over a 27-month longitudinal study — was built on this principle. We do not just measure how fast individual tickets close. We measure whether the rate of resolution is holding, accelerating, or decelerating across the entire operational surface.

What Good Looks Like: The Top 10%

A healthy MRV is not a single number. It is a trend line. But the benchmarks help frame what high-performing IT organizations actually achieve.

According to the 2025 DORA report, only 8.5% of teams achieve a change failure rate between 0% and 2%, meaning 98–100% of their changes succeed without causing incidents or requiring rollback. These are the elite performers. Earlier DORA research placed elite teams at a 5% change failure rate (95% success), with high performers at 80% success and medium performers at 90% (in that clustering, the medium group outperformed the high group on this one measure).

| Metric | Industry Median | Top 10% |
|---|---|---|
| Change Success Rate | 75–80% | 95–99%+ |
| Change Failure Rate (DORA) | 10–15% | 0–2% |
| MTTR | 8.5+ hours (HDI) / 16+ days (mid-market ERP) | < 2 hours / < 2 days |
| First Contact Resolution | 70–74% | 84%+ |
| Resolution SLA Compliance | 90–93% | 96–98% |
| Unplanned Work Ratio | 35–45% | < 20% |
| Deployment/Change Frequency | Monthly or quarterly | Multiple times per week or on demand |

The pattern is consistent across both DORA and ITIL benchmarks: the top performers are not choosing between speed and stability. They are excelling at both. They deploy more frequently, fail less often, resolve faster, and sustain higher throughput. These are not teams with more people. They are teams with more available capacity — because they have systematically reduced the unplanned work that consumes everyone else.

The unplanned work ratio row is the one that connects MRV to the rest of the stack. When unplanned work drops below 20%, teams gain enough capacity headroom to sustain high MRV even as demand fluctuates. When it sits at 35–45%, every fluctuation in demand triggers the three-stage degradation sequence described above.

The Red Flag: Three Metrics, Not One

MRV alone is necessary but not sufficient. If an organization is tracking resolution throughput but not tracking the quality and composition of what is driving that throughput, the picture is incomplete.

The diagnostic triad we look for:

- Mean Resolution Velocity (MRV): is work moving through the system at a stable or improving rate?

- Change Success Rate: are the changes actually holding, or are they generating rework and reopens? This is the ITIL 4 Change Enablement KPI that most organizations track but few connect back to throughput.

- Unplanned Work Ratio: what percentage of capacity is being consumed by reactive work versus planned execution?

These three together form an early warning system. MRV tells you the system is decelerating. Change success rate tells you whether the deceleration is coming from rework — failed changes creating new incidents that consume the capacity that should be going to the next change. Unplanned work ratio tells you whether the deceleration is coming from capacity displacement — run-state operations crowding out planned work before it even starts.

Without all three, you are diagnosing with incomplete data.
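The way the three readings combine can be sketched as a small decision rule. The success-rate and unplanned-work thresholds below are borrowed from the Top 10% column of the benchmark table; the MRV tolerance, the class and function names, and the sample readings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TriadReading:
    mrv_change: float            # fractional change vs. prior period, e.g. -0.12
    change_success_rate: float   # 0.0 to 1.0
    unplanned_work_ratio: float  # 0.0 to 1.0


def diagnose(reading, min_success=0.95, max_unplanned=0.20, mrv_tolerance=-0.05):
    """Name the likely source of an MRV deceleration.

    mrv_tolerance is an assumed cutoff: MRV declines smaller than 5%
    are treated as stable.
    """
    if reading.mrv_change > mrv_tolerance:
        return ["stable"]
    causes = []
    if reading.change_success_rate < min_success:
        causes.append("rework")  # failed changes generating new incidents
    if reading.unplanned_work_ratio > max_unplanned:
        causes.append("capacity displacement")  # reactive work crowding out planned work
    return causes or ["unexplained"]


print(diagnose(TriadReading(-0.12, 0.97, 0.40)))  # ['capacity displacement']
print(diagnose(TriadReading(-0.12, 0.85, 0.15)))  # ['rework']
print(diagnose(TriadReading(-0.12, 0.85, 0.40)))  # ['rework', 'capacity displacement']
```

Dropping any one input collapses two of these outcomes into the same reading, which is the practical meaning of diagnosing with incomplete data.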

Organizations that track only MTTR and call it sufficient are watching a single vital sign and assuming the patient is healthy. An acceptable heart rate does not rule out organ failure.

The Practical Takeaway

The teams that track MRV catch capacity problems months before the teams that only track MTTR. That lead time is the difference between adjusting workload allocation in a planning cycle and scrambling to explain why the roadmap slipped after the quarter closes.

MRV is not a new invention. It is a reframing of data most IT organizations already collect — ticket volumes, resolution times, severity distributions — into a throughput metric that actually reveals system health. The data is already in your ITSM platform. The question is whether anyone is reading it as velocity instead of duration.

If your resolution times look fine but your backlog keeps growing, you do not have a speed problem. You have a throughput problem. MRV is how you see it before it sees you.

Metrics Don't Fix Dysfunction. Leadership Does.

We've spent this entire article talking about the physics of throughput, the diagnostic triad, and why Mean Resolution Velocity (MRV) is your ultimate early warning system. But let's be brutally honest for a second.

You can have the most beautiful, mathematically perfect MRV dashboard in the world. You can track your Unplanned Work Ratio down to the decimal. But metrics don't fix systemic dysfunction. Leadership does.

I've read countless posts pitching the latest framework or toolset to "fix" IT execution. But the true fix — the one that actually separates the teams achieving a 1.77-day resolution velocity from the rest of the pack — comes down to strong leadership at the CEO or CIO level establishing and fiercely protecting a culture of causality.

You can pay for all the ITIL or Agile certifications in the world, but certifications don't change behavior. A firm culture of causality will eat those frameworks for lunch every single time.

Having a culture of causality means that when your MRV starts to silently drop and your team enters "Capacity Absorption", you don't just authorize overtime or blindly reboot servers to see if the problem magically goes away. Instead, your teams rigorously trace the failure back to its root cause.

But this operational shift doesn't happen organically. Culture is completely downstream from leadership. It requires the "tone at the top" to enforce strict accountability, declaring that the only acceptable number of unauthorized changes is zero. When leadership holds that line, stops rewarding "heroes" who just put out fires, and instead demands root-cause problem solving, it creates a discipline that ripples through the entire organization.

MRV is the ultimate diagnostic tool to tell you when your operations are experiencing organ failure. But it is a culture of causality that actually cures the patient.

Sources

- DORA State of DevOps Report 2025 — Google Cloud / DORA Team

- HDI Support Center Benchmarks (2024–2025)

- Freshservice IT Service Management Benchmark Report 2024

- ITIL 4 — Change Enablement Practice Guide (AXELOS / PeopleCert)
